Caching is typically the most effective way to boost an application's performance.

For dynamic websites, when rendering a template, you'll often have to gather data from various sources (like a database, the file system, and third-party APIs, to name a few), process the data, and apply business logic to it before serving it up to a client. Any delay due to network latency will be noticed by the end user.

For instance, say you have to make an HTTP call to an external API to grab the data required to render a template. Even in perfect conditions, this will increase the rendering time, which in turn increases the overall load time. What if the API goes down, or you're subject to rate limiting? Either way, if the data is infrequently updated, it's a good idea to implement a caching mechanism so that you don't have to make the HTTP call for each client request.

This article looks at how to do just that by first reviewing Django's caching framework as a whole and then detailing step-by-step how to cache a Django view.

Dependencies:

- Django v3.2.5

- django-redis v5.0.0

- Python v3.9.6

- pymemcache v3.5.0

- Requests v2.26.0

--

Django Caching Articles:

- Caching in Django (this article!)

- Low-Level Cache API in Django

Objectives

By the end of this tutorial, you should be able to:

- Explain why you may want to consider caching a Django view

- Describe Django's built-in options available for caching

- Cache a Django view with Redis

- Load test a Django app with Apache Bench

- Cache a Django view with Memcached

Django Caching Types

Django comes with several built-in caching backends, as well as support for a custom backend.

The built-in options are:

- Memcached: Memcached is a memory-based, key-value store for small chunks of data. It supports distributed caching across multiple servers.

- Database: Here, the cached data is stored in a database table. The table can be created with one of Django's management commands (createcachetable). This isn't the most performant caching type, but it can be useful for storing complex database queries.

- File system: The cache is saved on the file system, in separate files for each cache value. This is the slowest of all the caching types, but it's the easiest to set up in a production environment.

- Local memory: Local memory cache, which is best-suited for your local development or testing environments. While it's almost as fast as Memcached, it cannot scale beyond a single server, so it's not appropriate to use as a data cache for any app that uses more than one web server.

- Dummy: A "dummy" cache that doesn't actually cache anything but still implements the cache interface. It's meant to be used in development or testing when you don't want caching, but do not wish to change your code.
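
As a rough orientation, each built-in option corresponds to a backend path in the CACHES setting. The fragment below lists one illustrative entry per backend. The alias names (other than default), the table name, and the file path are placeholders, and a real project would normally define only the aliases it needs.

```python
# settings.py (illustrative fragment; aliases, table name, and path are placeholders)
CACHES = {
    # Local memory: per-process cache, handy for development and testing
    'default': {
        'BACKEND': 'django.core.cache.backends.locmem.LocMemCache',
        'LOCATION': 'unique-snowflake',
    },
    # Memcached via the pymemcache client
    'memcached': {
        'BACKEND': 'django.core.cache.backends.memcached.PyMemcacheCache',
        'LOCATION': '127.0.0.1:11211',
    },
    # Database table, created with: python manage.py createcachetable
    'database': {
        'BACKEND': 'django.core.cache.backends.db.DatabaseCache',
        'LOCATION': 'my_cache_table',
    },
    # File system: one file per cached value under this directory
    'filesystem': {
        'BACKEND': 'django.core.cache.backends.filebased.FileBasedCache',
        'LOCATION': '/var/tmp/django_cache',
    },
    # Dummy: implements the interface but stores nothing
    'dummy': {
        'BACKEND': 'django.core.cache.backends.dummy.DummyCache',
    },
}
```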

Django Caching Levels

Caching in Django can be implemented on different levels (or parts of the site). You can cache the entire site or specific parts with various levels of granularity (listed from coarsest to finest):

- Per-site cache

- Per-view cache

- Template fragment cache

- Low-level cache API

Per-site cache

This is the easiest way to implement caching in Django. To do this, all you'll have to do is add two middleware classes to your settings.py file:

MIDDLEWARE = [
    'django.middleware.cache.UpdateCacheMiddleware',     # NEW
    'django.middleware.common.CommonMiddleware',
    'django.middleware.cache.FetchFromCacheMiddleware',  # NEW
]

The order of the middleware is important here.

UpdateCacheMiddleware must come before FetchFromCacheMiddleware. For more information, take a look at Order of MIDDLEWARE in the Django docs.

You then need to add the following settings:

CACHE_MIDDLEWARE_ALIAS = 'default' # which cache alias to use

CACHE_MIDDLEWARE_SECONDS = 600 # number of seconds to cache a page for (TTL); must be an integer

CACHE_MIDDLEWARE_KEY_PREFIX = '' # should be used if the cache is shared across multiple sites that use the same Django instance

Although caching the entire site could be a good option if your site has little or no dynamic content, it may not be appropriate to use for large sites with a memory-based cache backend since RAM is, well, expensive.

Per-view cache

Rather than wasting precious memory space on caching static pages or dynamic pages that source data from a rapidly changing API, you can cache specific views. This is the approach that we'll use in this article. It's also the caching level that you should almost always start with when looking to implement caching in your Django app.

You can implement this type of cache with the cache_page decorator either on the view function directly or in the path within URLConf:

from django.views.decorators.cache import cache_page

@cache_page(60 * 15)
def your_view(request, object_id):
    ...

# or

from django.urls import path
from django.views.decorators.cache import cache_page

urlpatterns = [
    path('object/<int:object_id>/', cache_page(60 * 15)(your_view)),
]

The cache itself is based on the URL, so requests to, say, object/1 and object/2 will be cached separately.

It's worth noting that implementing the cache directly on the view makes it more difficult to disable the cache in certain situations. For example, what if you wanted to allow certain users access to the view without the cache? Enabling the cache via the URLConf provides the opportunity to associate a different URL to the view that doesn't use the cache:

from django.urls import path
from django.views.decorators.cache import cache_page

urlpatterns = [
    path('object/<int:object_id>/', your_view),
    path('object/cache/<int:object_id>/', cache_page(60 * 15)(your_view)),
]

Template fragment cache

If your templates contain parts that change often based on the data, you'll probably want to leave them out of the cache.

For example, perhaps you use the authenticated user's email in the navigation bar in an area of the template. Well, if you have thousands of users, then that fragment will be duplicated thousands of times in RAM, once for each user. This is where template fragment caching comes into play: it lets you specify which areas of a template to cache.

To cache a list of objects:

{% load cache %}

{% cache 500 object_list %}
  <ul>
    {% for object in objects %}
      <li>{{ object.title }}</li>
    {% endfor %}
  </ul>
{% endcache %}

Here, {% load cache %} gives us access to the cache template tag, which expects a cache timeout in seconds (500) along with the name of the cache fragment (object_list).
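
The cache tag also accepts extra arguments after the fragment name, which make the cached copy vary by those values (for example, one copy per user). Conceptually, the fragment name and a hash of those arguments are combined into the cache key. The sketch below imitates that idea with the standard library. The fragment_key helper and the navbar fragment name are hypothetical, and the code only approximates what Django does internally.

```python
import hashlib


def fragment_key(fragment_name, vary_on=()):
    """Build a key from a fragment name plus a hash of its vary-on values."""
    hasher = hashlib.md5()
    for arg in vary_on:
        hasher.update(str(arg).encode())
        hasher.update(b':')
    return f'template.cache.{fragment_name}.{hasher.hexdigest()}'


# A fragment shared by every visitor maps to a single key.
shared = fragment_key('object_list')

# A fragment that varies by user gets one key (and one cached copy) per user.
alice = fragment_key('navbar', ['alice@example.com'])
bob = fragment_key('navbar', ['bob@example.com'])

print(shared)
print(alice != bob)
```

This is why per-user fragments are costly to cache: every distinct vary-on value produces a separate entry.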

Low-level cache API

For cases where the previous options don't provide enough granularity, you can use the low-level API to manage individual objects in the cache by cache key.

For example:

from django.core.cache import cache

def get_context_data(self, **kwargs):
    context = super().get_context_data(**kwargs)

    objects = cache.get('objects')

    if objects is None:
        # Cache miss: query the database and store the result
        objects = Object.objects.all()
        cache.set('objects', objects)

    context['objects'] = objects

    return context

In this example, you'll want to invalidate (or remove) the cache when objects are added, changed, or removed from the database. One way to manage this is via database signals:

from django.core.cache import cache
from django.db.models.signals import post_delete, post_save
from django.dispatch import receiver


@receiver(post_delete, sender=Object)
def object_post_delete_handler(sender, **kwargs):
    cache.delete('objects')


@receiver(post_save, sender=Object)
def object_post_save_handler(sender, **kwargs):
    cache.delete('objects')

For more on using database signals to invalidate cache, check out the Low-Level Cache API in Django article.

With that, let's look at some examples.

Project Setup

Clone down the base project from the cache-django-view repo, and then check out the base branch:

$ git clone https://github.com/testdrivenio/cache-django-view.git --branch base --single-branch

$ cd cache-django-view

Create (and activate) a virtual environment and install the requirements:

$ python3.9 -m venv venv

$ source venv/bin/activate

(venv)$ pip install -r requirements.txt

Apply the Django migrations, and then start the server:

(venv)$ python manage.py migrate

(venv)$ python manage.py runserver

Navigate to http://127.0.0.1:8000 in your browser of choice to ensure that everything works as expected.

Take note of your terminal. You should see the total execution time for the request:

Total time: 2.23s

This metric comes from core/middleware.py:

import logging
import time


def metric_middleware(get_response):
    def middleware(request):
        # Get beginning stats
        start_time = time.perf_counter()

        # Process the request
        response = get_response(request)

        # Get ending stats
        end_time = time.perf_counter()

        # Calculate stats
        total_time = end_time - start_time

        # Log the results
        logger = logging.getLogger('debug')
        logger.info(f'Total time: {(total_time):.2f}s')
        print(f'Total time: {(total_time):.2f}s')

        return response

    return middleware

Take a quick look at the view in apicalls/views.py:

import datetime

import requests
from django.views.generic import TemplateView


BASE_URL = 'https://httpbin.org/'


class ApiCalls(TemplateView):
    template_name = 'apicalls/home.html'

    def get_context_data(self, **kwargs):
        context = super().get_context_data(**kwargs)
        response = requests.get(f'{BASE_URL}/delay/2')
        response.raise_for_status()
        context['content'] = 'Results received!'
        context['current_time'] = datetime.datetime.now()
        return context

This view makes an HTTP call with requests to httpbin.org. To simulate a long request, the response from the API is delayed for two seconds. So, it should take about two seconds for http://127.0.0.1:8000 to render, not only on the initial request but on each subsequent request as well. While a two-second load is somewhat acceptable on the initial request, it's completely unacceptable for subsequent requests since the data is not changing. Let's fix this by caching the entire view using Django's Per-view cache level.

Workflow:

- Make full HTTP call to httpbin.org on the initial request

- Cache the view

- Subsequent requests will then pull from the cache, bypassing the HTTP call

- Invalidate the cache after a period of time (TTL)
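
The workflow above can be sketched without Django at all. The following is a minimal, standard-library illustration of the same idea (compute once, serve from the cache, recompute after the TTL). The TTLCache class and the fake clock are hypothetical stand-ins, not part of Django or the project.

```python
import time


class TTLCache:
    """Tiny in-process cache with per-entry expiry (illustration only)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._store = {}  # key -> (expires_at, value)

    def get_or_compute(self, key, compute, ttl):
        entry = self._store.get(key)
        now = self._clock()
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: skip the slow call
        value = compute()  # cache miss or expired entry: do the slow work
        self._store[key] = (now + ttl, value)
        return value


# A fake clock lets us show expiry without actually waiting.
fake_now = [0.0]
calls = []


def slow_api_call():
    calls.append(1)  # stands in for the two-second HTTP request
    return 'Results received!'


cache = TTLCache(clock=lambda: fake_now[0])
first = cache.get_or_compute('home', slow_api_call, ttl=300)   # slow call runs
second = cache.get_or_compute('home', slow_api_call, ttl=300)  # served from cache
fake_now[0] = 301.0                                            # TTL has passed
third = cache.get_or_compute('home', slow_api_call, ttl=300)   # slow call runs again

print(len(calls))
```

Only the first and third lookups trigger the slow call; the second is answered from the cache.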

Baseline Performance Benchmark

Before adding the cache, let's quickly run a load test with Apache Bench to get a baseline: a rough sense of how many requests our application can handle per second.

Apache Bench comes pre-installed on Mac.

If you're on a Linux system, chances are it's already installed and ready to go as well. If not, you can install it via APT (apt-get install apache2-utils) or YUM (yum install httpd-tools). Windows users will need to download and extract the Apache binaries.

Add Gunicorn to the requirements file:

gunicorn==20.1.0

Kill the Django dev server and install Gunicorn:

(venv)$ pip install -r requirements.txt

Next, serve up the Django app with Gunicorn (and four workers) like so:

(venv)$ gunicorn core.wsgi:application -w 4

In a new terminal window, run Apache Bench:

$ ab -n 100 -c 10 http://127.0.0.1:8000/

This will simulate 100 connections over 10 concurrent threads. That's 100 requests, 10 at a time.

Take note of the requests per second:

Requests per second: 1.69 [#/sec] (mean)
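
This figure is in line with a back-of-the-envelope estimate. Assuming Gunicorn's default synchronous workers, each worker is blocked for roughly two seconds per request, so four workers can complete at most about two requests per second. A quick sketch of that arithmetic, ignoring network and rendering overhead:

```python
workers = 4               # gunicorn -w 4
seconds_per_request = 2   # httpbin.org /delay/2
total_requests = 100      # ab -n 100

# Each synchronous worker handles one request at a time.
max_rps = workers / seconds_per_request
min_duration = total_requests / max_rps

print(f'Upper bound: {max_rps:.2f} requests/second')
print(f'Minimum test duration: {min_duration:.0f} seconds')
```

The measured value sits a little below this upper bound, as you would expect once real-world overhead is included.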

Keep in mind that Django Debug Toolbar will add a bit of overhead. Benchmarking in general is difficult to get perfectly right. The important thing is consistency. Pick a metric to focus on and use the same environment for each test.

Kill the Gunicorn server and spin the Django dev server back up:

(venv)$ python manage.py runserver

With that, let's look at how to cache a view.

Caching a View

Start by decorating the ApiCalls view with the @cache_page decorator like so:

import datetime

import requests
from django.utils.decorators import method_decorator  # NEW
from django.views.decorators.cache import cache_page  # NEW
from django.views.generic import TemplateView


BASE_URL = 'https://httpbin.org/'


@method_decorator(cache_page(60 * 5), name='dispatch')  # NEW
class ApiCalls(TemplateView):
    template_name = 'apicalls/home.html'

    def get_context_data(self, **kwargs):
        context = super().get_context_data(**kwargs)
        response = requests.get(f'{BASE_URL}/delay/2')
        response.raise_for_status()
        context['content'] = 'Results received!'
        context['current_time'] = datetime.datetime.now()
        return context

Since we're using a class-based view, we can't apply cache_page to the class directly, so we wrapped it in method_decorator and passed dispatch (the method to be decorated) as the name argument.

The cache in this example sets a timeout (or TTL) of five minutes.

Alternatively, you could set this in your settings like so:

# Cache time to live is 5 minutes

CACHE_TTL = 60 * 5

Then, back in the view:

import datetime

import requests
from django.conf import settings
from django.core.cache.backends.base import DEFAULT_TIMEOUT
from django.utils.decorators import method_decorator
from django.views.decorators.cache import cache_page
from django.views.generic import TemplateView


BASE_URL = 'https://httpbin.org/'
CACHE_TTL = getattr(settings, 'CACHE_TTL', DEFAULT_TIMEOUT)


@method_decorator(cache_page(CACHE_TTL), name='dispatch')
class ApiCalls(TemplateView):
    template_name = 'apicalls/home.html'

    def get_context_data(self, **kwargs):
        context = super().get_context_data(**kwargs)
        response = requests.get(f'{BASE_URL}/delay/2')
        response.raise_for_status()
        context['content'] = 'Results received!'
        context['current_time'] = datetime.datetime.now()
        return context

Next, let's add a cache backend.

Redis vs Memcached

Memcached and Redis are in-memory, key-value data stores. They are easy to use and optimized for high-performance lookups. You probably won't see much difference in performance or memory usage between the two. That said, Memcached is slightly easier to configure since it's designed for simplicity and ease of use. Redis, on the other hand, has a richer set of features, so it has a wide range of use cases beyond caching. For example, it's often used to store user sessions or as a message broker in a pub/sub system. Because of its flexibility, unless you're already invested in Memcached, Redis is usually the better choice.

For more on this, review this Stack Overflow answer.

Next, pick your data store of choice and let's look at how to cache a view.

Option 1: Redis with Django

Download and install Redis.

If you’re on a Mac, we recommend installing Redis with Homebrew:

$ brew install redis

Once installed, in a new terminal window start the Redis server and make sure that it's running on its default port, 6379. The port number will be important when we tell Django how to communicate with Redis.

$ redis-server

For Django to use Redis as a cache backend, we first need to install django-redis.

Add it to the requirements.txt file:

django-redis==5.0.0

Install:

(venv)$ pip install -r requirements.txt

Next, add the custom backend to the settings.py file:

CACHES = {
    'default': {
        'BACKEND': 'django_redis.cache.RedisCache',
        'LOCATION': 'redis://127.0.0.1:6379/1',
        'OPTIONS': {
            'CLIENT_CLASS': 'django_redis.client.DefaultClient',
        }
    }
}

Now, when you run the server again, Redis will be used as the cache backend:

(venv)$ python manage.py runserver

With the server up and running, navigate to http://127.0.0.1:8000.

The first request will still take about two seconds. Refresh the page. The page should load almost instantaneously. Take a look at the load time in your terminal. It should be close to zero:

Total time: 0.01s

Curious what the cached data looks like inside of Redis?

Run Redis CLI in interactive mode in a new terminal window:

$ redis-cli

You should see:

127.0.0.1:6379>

Run ping to ensure everything works properly:

127.0.0.1:6379> ping

PONG

Turn back to the settings file. We used Redis database number 1 ('LOCATION': 'redis://127.0.0.1:6379/1'), so run select 1 to select that database and then run keys * to view all the keys:

127.0.0.1:6379> select 1

OK

127.0.0.1:6379[1]> keys *

1) ":1:views.decorators.cache.cache_header..17abf5259517d604cc9599a00b7385d6.en-us.UTC"

2) ":1:views.decorators.cache.cache_page..GET.17abf5259517d604cc9599a00b7385d6.d41d8cd98f00b204e9800998ecf8427e.en-us.UTC"

We can see that Django put in one header key and one cache_page key.
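
The key names follow a recognizable pattern. Judging by the keys shown above, the page key is made up of the cache backend's key prefix and version (:1:), a fixed namespace, the CACHE_MIDDLEWARE_KEY_PREFIX (empty here), the request method, an MD5 hash of the URL, an MD5 hash of the values of any headers named in Vary, and the active language and time zone. The sketch below rebuilds that shape with the standard library. It's an approximation for illustration, not Django's actual implementation, and the page_cache_key helper is hypothetical.

```python
import hashlib


def page_cache_key(url, method='GET', key_prefix='', vary_values=(),
                   language='en-us', timezone='UTC', version=1, backend_prefix=''):
    """Approximate the shape of a cache_page key (illustration only)."""
    url_hash = hashlib.md5(url.encode('ascii')).hexdigest()
    vary_hash = hashlib.md5()
    for value in vary_values:
        vary_hash.update(value.encode())
    key = (
        f'views.decorators.cache.cache_page.{key_prefix}.{method}.'
        f'{url_hash}.{vary_hash.hexdigest()}.{language}.{timezone}'
    )
    return f'{backend_prefix}:{version}:{key}'


key = page_cache_key('http://127.0.0.1:8000/')
print(key)

# With no Vary header values, the second hash is the MD5 of an empty string.
empty_md5 = hashlib.md5(b'').hexdigest()
print(empty_md5)
```

The empty-string MD5 matches the second hash in the cache_page key listed above, which is consistent with the response not varying on any request headers.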

To view the actual cached data, run the get command with the key as an argument:

127.0.0.1:6379[1]> get ":1:views.decorators.cache.cache_page..GET.17abf5259517d604cc9599a00b7385d6.d41d8cd98f00b204e9800998ecf8427e.en-us.UTC"

You should see something similar to:

"\x80\x05\x95D\x04\x00\x00\x00\x00\x00\x00\x8c\x18django.template.response\x94\x8c\x10TemplateResponse

\x94\x93\x94)\x81\x94}\x94(\x8c\x05using\x94N\x8c\b_headers\x94}\x94(\x8c\x0ccontent-type\x94\x8c\

x0cContent-Type\x94\x8c\x18text/html; charset=utf-8\x94\x86\x94\x8c\aexpires\x94\x8c\aExpires\x94\x8c\x1d

Fri, 01 May 2020 13:36:59 GMT\x94\x86\x94\x8c\rcache-control\x94\x8c\rCache-Control\x94\x8c\x0

bmax-age=300\x94\x86\x94u\x8c\x11_resource_closers\x94]\x94\x8c\x0e_handler_class\x94N\x8c\acookies

\x94\x8c\x0chttp.cookies\x94\x8c\x0cSimpleCookie\x94\x93\x94)\x81\x94\x8c\x06closed\x94\x89\x8c

\x0e_reason_phrase\x94N\x8c\b_charset\x94N\x8c\n_container\x94]\x94B\xaf\x02\x00\x00

<!DOCTYPE html>\n<html lang=\"en\">\n<head>\n <meta charset=\"UTF-8\">\n <title>Home</title>\n

<link rel=\"stylesheet\" href=\"https://stackpath.bootstrapcdn.com/bootstrap/4.4.1/css/bootstrap.min.css\

"\n integrity=\"sha384-Vkoo8x4CGsO3+Hhxv8T/Q5PaXtkKtu6ug5TOeNV6gBiFeWPGFN9MuhOf23Q9Ifjh\"

crossorigin=\"anonymous\">\n\n</head>\n<body>\n<div class=\"container\">\n <div class=\"pt-3\">\n

<h1>Below is the result of the APICall</h1>\n </div>\n <div class=\"pt-3 pb-3\">\n

<a href=\"/\">\n <button type=\"button\" class=\"btn btn-success\">\n

Get new data\n </button>\n </a>\n </div>\n Results received!<br>\n

13:31:59\n</div>\n</body>\n</html>\x94a\x8c\x0c_is_rendered\x94\x88ub."
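
The unreadable bytes around the HTML are serialization overhead. The leading \x80\x05 is the header that Python's pickle module writes for protocol 5, which suggests the response object was pickled before being stored. A small standard-library sketch, using a plain dictionary as a stand-in for the response object (an assumption for illustration), shows the same header:

```python
import pickle

# A plain dict standing in for the cached response (illustration only).
payload = {'content': 'Results received!', 'max_age': 300}

blob = pickle.dumps(payload, protocol=5)
print(blob[:2])  # protocol marker followed by the protocol version

# Round trip: the stored bytes turn back into the original object.
# Only unpickle data that comes from a source you trust.
restored = pickle.loads(blob)
print(restored == payload)
```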

Exit the interactive CLI once done:

127.0.0.1:6379[1]> exit

Skip down to the "Performance Tests" section.

Option 2: Memcached with Django

Start by adding pymemcache to the requirements.txt file:

pymemcache==3.5.0

Install the dependencies:

(venv)$ pip install -r requirements.txt

Next, we need to update the settings in core/settings.py to enable the Memcached backend:

CACHES = {
    'default': {
        'BACKEND': 'django.core.cache.backends.memcached.PyMemcacheCache',
        'LOCATION': '127.0.0.1:11211',
    }
}

Here, we added the PyMemcacheCache backend and indicated that Memcached should be running on our local machine on localhost (127.0.0.1) port 11211, which is the default port for Memcached.

Next, we need to install and run the Memcached daemon. The easiest way to install it is via a package manager like APT, YUM, Homebrew, or Chocolatey, depending on your operating system:

# linux

$ apt-get install memcached

$ yum install memcached

# mac

$ brew install memcached

# windows

$ choco install memcached

Then, run it in a different terminal on port 11211:

$ memcached -p 11211

# test: telnet localhost 11211

For more information on installation and configuration of Memcached, review the official wiki.

Navigate to http://127.0.0.1:8000 in your browser again. The first request will still take the full two seconds, but all subsequent requests will take advantage of the cache. So, if you refresh or press the "Get new data" button, the page should load almost instantly.

What does the execution time look like in your terminal?

Total time: 0.03s

Performance Tests

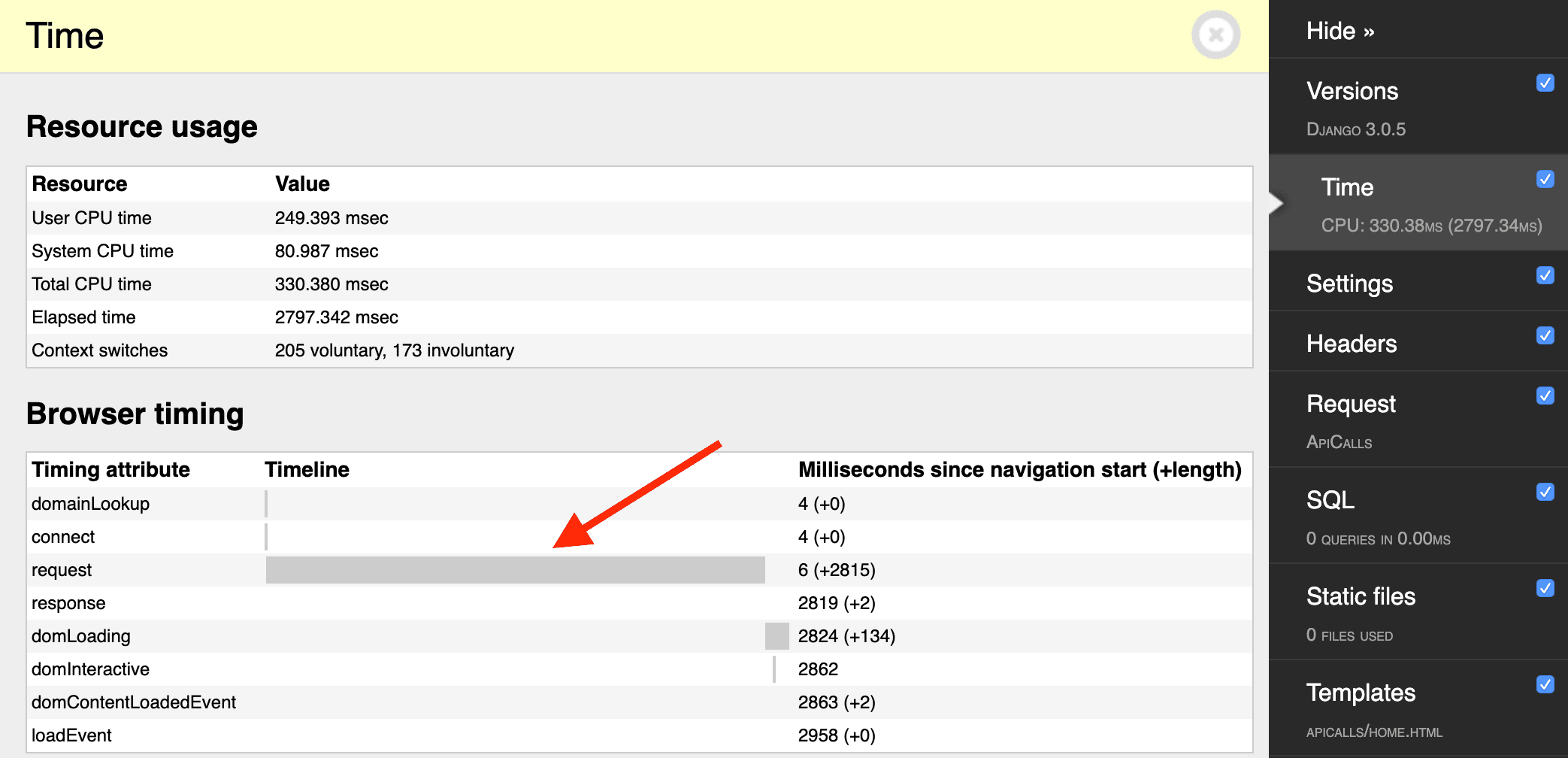

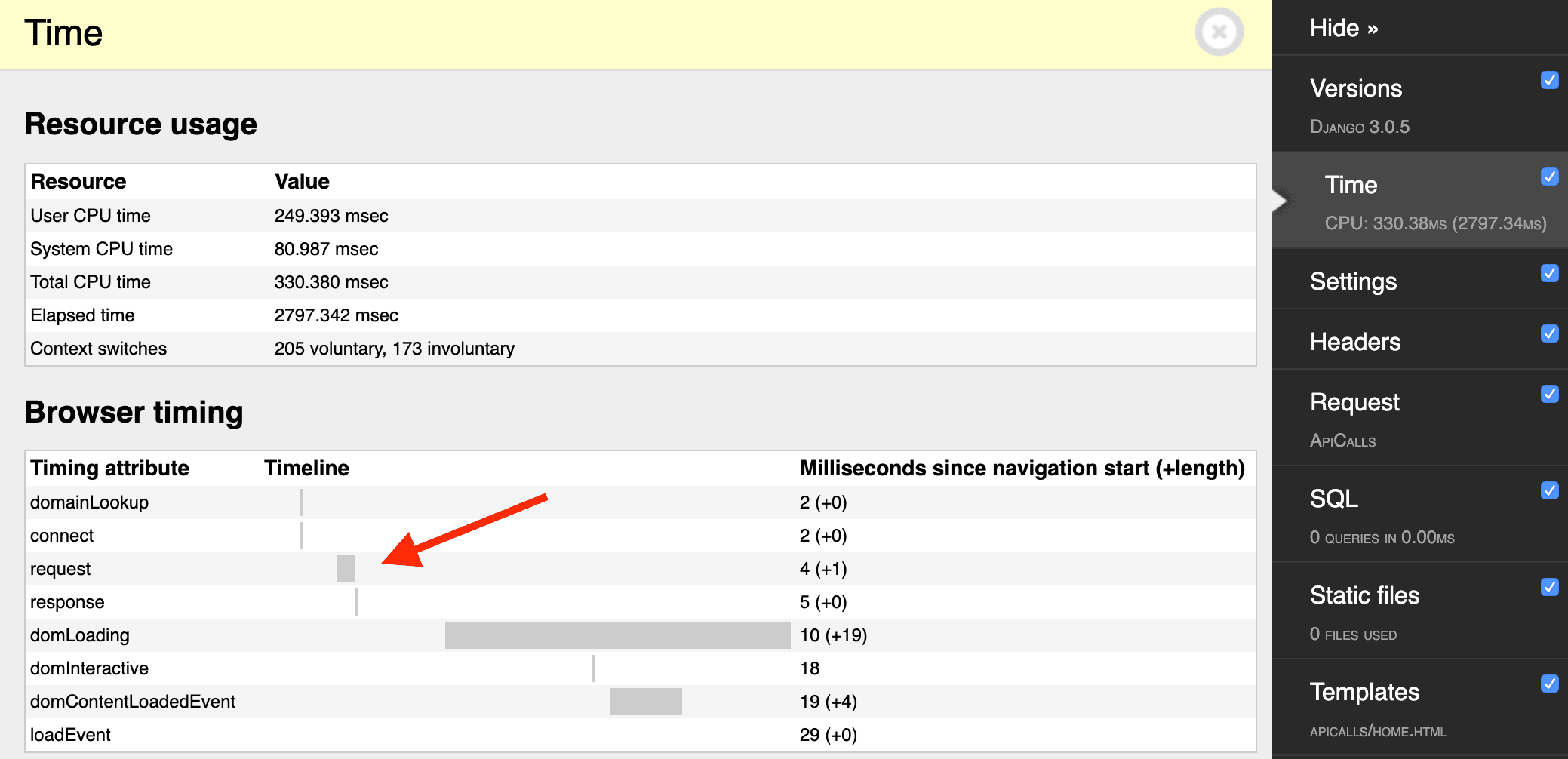

If we look at the time it takes to load the first request vs. the second (cached) request in Django Debug Toolbar, it will look similar to:

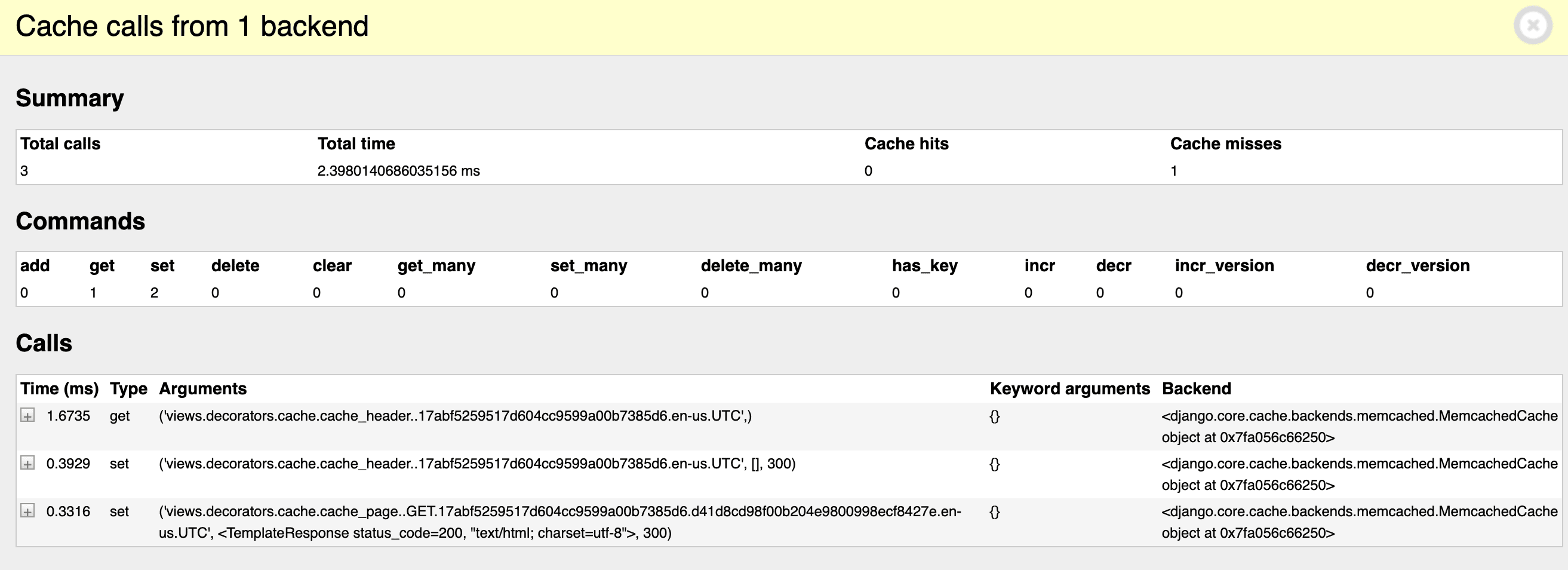

Also in the Debug Toolbar, you can see the cache operations:

Spin Gunicorn back up again and re-run the performance tests:

$ ab -n 100 -c 10 http://127.0.0.1:8000/

What are the new requests per second? It's about 36 on my machine!

Conclusion

In this article, we looked at the different built-in options for caching in Django as well as the different levels of caching available. We also detailed how to cache a view using Django's Per-view cache with both Memcached and Redis.

You can find the final code for both options, Memcached and Redis, in the cache-django-view repo.

--

In general, you'll want to look to caching when page rendering is slow due to network latency from database queries or HTTP calls.

From there, it's highly recommended to use a custom Django cache backend with Redis and the Per-view cache level. If you need more granularity and control, because not all of the data on the template is the same for all users or parts of the data change frequently, then jump down to the Template fragment cache or Low-level cache API.

J-O Eriksson
