Tests and Code Quality

Part 1, Chapter 5

For the CI/CD pipeline, we'll start with tests and code quality. The first thing you need to create is a Docker image with Python and uv installed. You'll run your tests and code quality jobs inside containers using this image.

At this point, you may be tempted to use images from Docker Hub. Keep in mind that you want your CI/CD pipelines to be fast, to speed up feedback loops, so it's better to use images that only contain the things you need. You don't want to waste time downloading unnecessary dependencies, in other words. You can also bake dependencies specific to your needs in the Docker images, which will save time as well.

Docker Image

Create a new folder called "ci_cd". Inside that folder, create a new folder called "python" and inside that one create a Dockerfile:

```
ci_cd
└── python
    └── Dockerfile
```

Dockerfile:

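```dockerfile
FROM python:3.14-slim

# The slim image doesn't include curl; the tests job's after_script uses it to download the Codecov uploader
RUN apt-get update \
    && apt-get install -y --no-install-recommends curl \
    && rm -rf /var/lib/apt/lists/*

COPY --from=ghcr.io/astral-sh/uv:latest /uv /uvx /bin/

# groupadd and useradd both come from the same package, which the base image already provides
RUN mkdir -p /home/gitlab && groupadd gitlab && useradd -d /home/gitlab -g gitlab gitlab && chown gitlab:gitlab /home/gitlab

USER gitlab

WORKDIR /home/gitlab
```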

What's happening here?

- To speed up our builds, we used python:3.14-slim as a base image. Python slim-based images only install the packages required to run Python.
- We then copied the uv and uvx binaries from uv's official Docker image. This is the recommended way to install uv inside Docker: it's fast and doesn't require cURL or pip.
- Finally, we created a user called gitlab.

Running things as root inside a Docker container is not safe. That's why you added a new user. We called it gitlab because, well, we're using GitLab. The name doesn't matter. It will still work fine if you use mark or mary.

With that, let's move on to the CI/CD configuration.

GitLab CI Config

Create a new file in the project root called .gitlab-ci.yml:

```yaml
stages:
  - docker

cache:
  key: ${CI_JOB_NAME}
  paths:
    - ${CI_PROJECT_DIR}/services/talk_booking/.venv/

build-python-ci-image:
  stage: docker
  image:
    name: gcr.io/kaniko-project/executor:v1.24.0-debug
    entrypoint: [""]
  before_script:
    - echo "{\"auths\":{\"${CI_REGISTRY}\":{\"auth\":\"$(echo -n ${CI_REGISTRY_USER}:${CI_REGISTRY_PASSWORD} | base64 -w 0)\"}}}" > /kaniko/.docker/config.json
  script:
    - /kaniko/executor
      --context "${CI_PROJECT_DIR}/ci_cd/python"
      --dockerfile "${CI_PROJECT_DIR}/ci_cd/python/Dockerfile"
      --destination "registry.gitlab.com/<your-gitlab-username>/talk-booking:cicd-python3.14-slim"
```

Make sure to replace <your-gitlab-username> with your actual GitLab username.

GitLab uses this file to configure a CI/CD pipeline.

A CI/CD pipeline is a series of jobs that run in a specific order to deliver a new version of software. You can think of jobs as steps: they define what to do, such as running tests, checking for code quality issues, or building a Docker image. Jobs are organized into stages, and stages define when jobs run.

First, we defined a single stage called docker, which holds the job that builds the Docker image:

```yaml
stages:
  - docker
```

Stages are logical groupings of jobs. Jobs within a stage are executed in parallel. Stages are executed sequentially in the same order as they're defined.
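To make the ordering concrete, here's a small Python model of it. It's a sketch of the semantics, not how GitLab schedules anything internally; execution_plan is a hypothetical helper, and the job-to-stage mapping mirrors this chapter's pipeline once the test stage is added later on.

```python
def execution_plan(stages, jobs):
    """Group jobs into batches, one per stage, in the order the stages are declared.

    Hypothetical helper that models GitLab's stage semantics for illustration.
    """
    plan = []
    for stage in stages:
        # Jobs that share a stage form one batch that may run in parallel
        batch = [name for name, job_stage in jobs.items() if job_stage == stage]
        if batch:  # a stage with no jobs is simply skipped
            plan.append((stage, batch))
    return plan


stages = ["docker", "test"]
jobs = {
    "build-python-ci-image": "docker",
    "service-talk-booking-code-quality": "test",
    "service-talk-booking-tests": "test",
}

# Batches print in stage order: the docker job first, then both test jobs together
for stage, batch in execution_plan(stages, jobs):
    print(f"{stage}: {', '.join(batch)}")
```

Whether the jobs in one batch truly run at the same time also depends on how many runners are available to pick them up.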

It's worth noting that you can define variables globally (at the top level of the file) or per job, and they're scoped accordingly. GitLab CI has no stage-level variables.
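When both levels define the same name, the job-level value wins. The sketch below models only that rule with plain dictionaries; the variable names are hypothetical, and variables set in the project's CI/CD settings (which take precedence over both YAML levels) are left out.

```python
def effective_variables(global_vars, job_vars):
    """Return the variables a job sees: job-level values override global ones.

    Hypothetical helper; models only the two YAML levels.
    """
    merged = dict(global_vars)  # start from the global (top-level) variables
    merged.update(job_vars)     # job-level definitions shadow globals with the same name
    return merged


global_vars = {"PYTHON_VERSION": "3.14", "LOG_LEVEL": "info"}  # hypothetical names
job_vars = {"LOG_LEVEL": "debug"}

print(effective_variables(global_vars, job_vars))
# {'PYTHON_VERSION': '3.14', 'LOG_LEVEL': 'debug'}
```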

After that, we defined a job aptly named build-python-ci-image:

```yaml
build-python-ci-image:
  stage: docker
  image:
    name: gcr.io/kaniko-project/executor:v1.24.0-debug
    entrypoint: [""]
  before_script:
    - echo "{\"auths\":{\"${CI_REGISTRY}\":{\"auth\":\"$(echo -n ${CI_REGISTRY_USER}:${CI_REGISTRY_PASSWORD} | base64 -w 0)\"}}}" > /kaniko/.docker/config.json
  script:
    - /kaniko/executor
      --context "${CI_PROJECT_DIR}/ci_cd/python"
      --dockerfile "${CI_PROJECT_DIR}/ci_cd/python/Dockerfile"
      --destination "registry.gitlab.com/<your-gitlab-username>/talk-booking:cicd-python3.14-slim"
```

This job uses Kaniko to build and push the Docker image. Kaniko builds Docker images inside a container without requiring a Docker daemon; it runs entirely in userspace, so it doesn't need privileged mode and works on GitLab's shared runners. We use the debug variant of the Kaniko image because it includes a shell, which GitLab CI needs in order to run the job's before_script and script commands. Setting entrypoint to [""] overrides the image's default entrypoint so the runner can start that shell.

In before_script, we configure Kaniko's Docker credentials so it can push the built image to GitLab's container registry. The script section then runs the Kaniko executor, pointing it to the Dockerfile context and specifying the destination image tag.
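The one-line echo in before_script is dense, so here's a Python sketch that builds the same JSON to make its shape easier to see: a Docker config whose auths entry maps the registry host to base64("user:password"). The credentials are placeholders; in the job, the values come from the predefined CI_REGISTRY, CI_REGISTRY_USER, and CI_REGISTRY_PASSWORD variables. The shell version passes -w 0 to base64 to disable line wrapping, and Python's base64.b64encode never wraps, so the encoded value is the same.

```python
import base64
import json


def kaniko_auth_config(registry: str, user: str, password: str) -> str:
    """Return Docker config JSON with a basic-auth entry for one registry (illustrative helper)."""
    # Same encoding as `echo -n user:password | base64 -w 0` in the before_script
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return json.dumps({"auths": {registry: {"auth": token}}})


# Placeholder credentials for illustration only
print(kaniko_auth_config("registry.gitlab.com", "ci-user", "not-a-real-password"))
```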

Keep in mind that you should always pin Docker images to specific version tags rather than using the latest tag, since a new release of an image can break your pipeline even when your own code hasn't changed.
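If you want to enforce this, a small check can scan the image references you use. The sketch below is a simplified parser written for illustration (is_pinned is a hypothetical helper, not part of any Docker tooling): it treats a reference as pinned when it carries a digest or an explicit tag other than latest.

```python
def is_pinned(image: str) -> bool:
    """Return True if an image reference has a digest or an explicit, non-"latest" tag.

    Hypothetical, simplified parser for illustration.
    """
    if "@sha256:" in image:
        return True  # digests are immutable
    # Look only at the last path segment: a registry host may contain ":" for its port
    name = image.rsplit("/", 1)[-1]
    if ":" not in name:
        return False  # no tag means Docker falls back to "latest"
    tag = name.rsplit(":", 1)[1]
    return tag != "latest"


images = [
    "gcr.io/kaniko-project/executor:v1.24.0-debug",
    "ghcr.io/astral-sh/uv:latest",
    "python:3.14-slim",
]
for image in images:
    print(f"{image}: {'pinned' if is_pinned(image) else 'NOT pinned'}")
```

Run against the images in this chapter, it flags ghcr.io/astral-sh/uv:latest from the Dockerfile, which you may want to pin to a specific uv release as well.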

You can view your Docker registry for your GitLab repo by clicking on Deploy -> Container Registry on the side nav.

Refer to the CI/CD YAML syntax reference (the .gitlab-ci.yml keyword reference) in the official GitLab docs for more on configuring the .gitlab-ci.yml file.

Most CI/CD SaaS products (GitLab CI/CD, GitHub Actions, CircleCI, and Travis CI, to name a few) use similar YAML configuration files for defining pipelines and jobs. You can check out an example GitHub workflow in the Python Project Workflow article.

Add the changes to git, create a new commit, and push your code up to GitLab:

```sh
$ git add -A
$ git commit -m 'CI Python docker image'
$ git push -u origin main
```

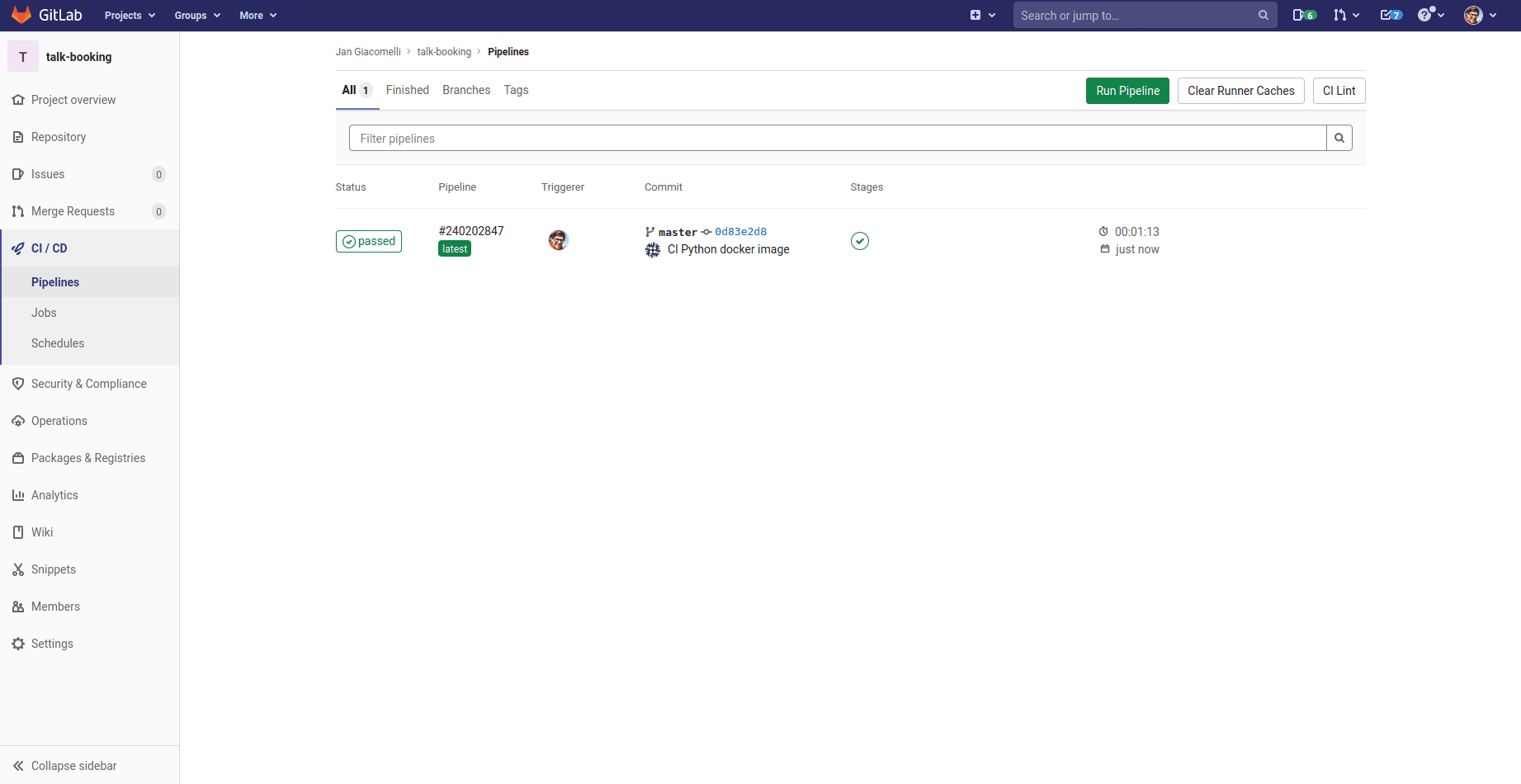

Click on CI/CD on the side nav of your repository. You should see your first pipeline running:

Make sure the pipeline succeeds. You now have an image ready for running tests and code quality checks!

Code Quality Checks

Moving along, let's add our code quality checks to the CI/CD pipeline.

First, add a new stage called test to the .gitlab-ci.yml file, which will be used to run code quality checks along with our automated tests with pytest:

```yaml
stages:
  - docker
  - test # new

cache:
  key: ${CI_JOB_NAME}
  paths:
    - ${CI_PROJECT_DIR}/services/talk_booking/.venv/

build-python-ci-image:
  stage: docker
  image:
    name: gcr.io/kaniko-project/executor:v1.24.0-debug
    entrypoint: [""]
  before_script:
    - echo "{\"auths\":{\"${CI_REGISTRY}\":{\"auth\":\"$(echo -n ${CI_REGISTRY_USER}:${CI_REGISTRY_PASSWORD} | base64 -w 0)\"}}}" > /kaniko/.docker/config.json
  script:
    - /kaniko/executor
      --context "${CI_PROJECT_DIR}/ci_cd/python"
      --dockerfile "${CI_PROJECT_DIR}/ci_cd/python/Dockerfile"
      --destination "registry.gitlab.com/<your-gitlab-username>/talk-booking:cicd-python3.14-slim"

# new
include:
  - local: /services/talk_booking/ci-cd.yml
```

include pulls external YAML files into your CI/CD pipeline configuration, which helps break up large config files and keeps them readable. The local path is relative to the repository root, hence the leading "/".
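Roughly speaking, GitLab merges the included file's keys into the main configuration. The sketch below models that with plain dictionaries, assuming a deep merge in which keys from the including file win and lists are replaced rather than concatenated. Treat it as an approximation (deep_merge is a hypothetical helper) and check GitLab's include documentation for the exact rules.

```python
def deep_merge(included, local):
    """Merge two config mappings; keys in `local` win, nested mappings merge recursively.

    Hypothetical helper approximating how included YAML combines with the main file.
    """
    result = dict(included)
    for key, value in local.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = deep_merge(result[key], value)
        else:
            result[key] = value  # scalars and lists are replaced, not combined
    return result


main_config = {
    "stages": ["docker", "test"],
    "build-python-ci-image": {"stage": "docker"},
}
included = {"service-talk-booking-tests": {"stage": "test", "script": ["uv run python -m pytest"]}}

print(sorted(deep_merge(included, main_config)))
# ['build-python-ci-image', 'service-talk-booking-tests', 'stages']
```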

Add a new ci-cd.yml config file to "services/talk_booking":

```yaml
service-talk-booking-code-quality:
  stage: test
  image: registry.gitlab.com/<your-gitlab-username>/talk-booking:cicd-python3.14-slim
  before_script:
    - cd services/talk_booking/
    - uv sync
  script:
    - uv run ruff check .
    - uv run ruff format --check .
    - uv run safety check
```

Here, we registered a new job called service-talk-booking-code-quality.

You'll name all of your projects' jobs using this structure: <project type>-<project name>-<job type>. You're more than welcome to change this structure. Just be consistent with your naming.

Take note of the registry.gitlab.com/<your-gitlab-username>/talk-booking:cicd-python3.14-slim image that we used to run the service-talk-booking-code-quality job. This is the same image that we built in the build-python-ci-image job.
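If you'd like to keep yourself honest about the naming convention, a regular expression can check it. This sketch assumes two project types (service, plus a hypothetical library type) and the two job types used in this chapter; parse_job_name is a hypothetical helper, and you'd extend the alternatives as your project grows.

```python
import re

# <project type>-<project name>-<job type>
# The allowed project and job types are assumptions for illustration.
JOB_NAME = re.compile(
    r"(?P<type>service|library)-(?P<name>[a-z0-9_-]+?)-(?P<job>code-quality|tests)"
)


def parse_job_name(name):
    """Split a job name into its parts, or return None if it breaks the convention."""
    match = JOB_NAME.fullmatch(name)
    return match.groupdict() if match else None


for name in ["service-talk-booking-code-quality", "service-talk-booking-tests", "talk-booking-tests"]:
    print(name, "->", parse_job_name(name))
```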

Within before_script, we moved to the appropriate directory and installed the Python dependencies with uv. We then defined our code quality checks inside script. If any of the checks exit with a non-zero code, the job will fail. Ruff handles both linting (ruff check) and formatting verification (ruff format --check), replacing several separate tools (Flake8, Black, isort, and Bandit) with a single, much faster tool.
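The fail-on-non-zero behavior is easy to model. Here's a sketch that runs commands in order and stops at the first failure, which is roughly what happens to the lines in script. run_checks is a hypothetical helper, and the commands are stand-ins built with sys.executable so the example runs anywhere; in the real job they'd be the uv run ruff and uv run safety commands.

```python
import subprocess
import sys


def run_checks(commands):
    """Run each command in order; return (failing_command, exit_code) on the first failure.

    Hypothetical helper that roughly mimics how a job treats its script lines.
    """
    for command in commands:
        result = subprocess.run(command)
        if result.returncode != 0:
            return command, result.returncode  # the job would fail here
    return None, 0  # every check passed


checks = [
    [sys.executable, "-c", "print('lint ok')"],
    [sys.executable, "-c", "raise SystemExit(1)"],  # simulated failing check
    [sys.executable, "-c", "print('never reached')"],
]
failed, code = run_checks(checks)
print(f"failed: {failed!r}, exit code: {code}")
```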

Before moving on, add the Ruff configuration to the [tool.ruff] section in pyproject.toml inside "services/talk_booking":

```toml
[tool.ruff]
line-length = 120
exclude = [
    ".git",
    "build",
    "dist",
    "alembic",
    ".venv",
]

[tool.ruff.lint]
select = ["E", "F", "W", "I", "S", "C90"]
ignore = []

[tool.ruff.lint.per-file-ignores]
"tests/**" = ["S101"]

[tool.ruff.lint.mccabe]
max-complexity = 10
```

The select option enables the following rule sets: pycodestyle errors (E) and warnings (W), Pyflakes (F), isort (I), Bandit security checks (S), and McCabe complexity (C90).
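McCabe complexity is, roughly, one plus the number of decision points in a function, and max-complexity = 10 caps it. The sketch below approximates the idea with Python's ast module; approx_complexity is a hypothetical helper, not Ruff's implementation, which counts some constructs differently, so don't expect identical numbers.

```python
import ast
import textwrap

# Node types counted as one decision point each (a rough approximation)
BRANCH_NODES = (ast.If, ast.For, ast.AsyncFor, ast.While, ast.ExceptHandler, ast.IfExp)


def approx_complexity(source):
    """Return 1 + the number of branching nodes found in the given source."""
    tree = ast.parse(source)
    return 1 + sum(isinstance(node, BRANCH_NODES) for node in ast.walk(tree))


EXAMPLE = textwrap.dedent("""\
    def classify(n):
        if n < 0:
            return "negative"
        elif n == 0:
            return "zero"
        for d in (2, 3):
            if n % d == 0:
                return f"divisible by {d}"
        return "other"
""")

# if + elif + for + inner if = 4 decision points
print(approx_complexity(EXAMPLE))  # 5
```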

The per-file-ignores section suppresses rule S101 in test files. S101 correctly flags the use of assert as a security risk in production code because assert statements can be stripped out with the -O (optimize) flag. So they shouldn't be relied on for checks inside the implementation. However, assert is the standard way to write assertions in pytest, so we disable this rule specifically for files inside the "tests" directory.
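You can see the problem for yourself: the snippet below runs a one-line program under python -O, where the assert is compiled away, and then shows the explicit-exception style that S101 nudges you toward in production code. apply_discount is a hypothetical function used only for illustration.

```python
import subprocess
import sys

# Under -O, the assert is removed, so the print runs even though the condition is False
result = subprocess.run(
    [sys.executable, "-O", "-c", "assert False, 'never checked'; print('assert was skipped')"],
    capture_output=True,
    text=True,
)
print(result.stdout.strip())  # assert was skipped


def apply_discount(price, percent):
    """Hypothetical helper: validate input with an explicit exception instead of assert."""
    # An explicit raise runs regardless of -O, unlike `assert 0 <= percent <= 100`
    if not 0 <= percent <= 100:
        raise ValueError(f"percent must be between 0 and 100, got {percent}")
    return price * (100 - percent) / 100
```

Inside "tests", a plain assert apply_discount(200, 25) == 150 remains the idiomatic pytest check, which is exactly why S101 is ignored there.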

Before committing your code, to avoid failed code quality jobs, run:

```sh
$ uv run ruff check . --fix
$ uv run ruff format .
```

Commit and push to the remote:

```sh
$ git add -A
$ git commit -m 'Add code quality job'
$ git push -u origin main
```

If safety check fails, update the problematic dependency via uv lock --upgrade-package <package-name> and push your code one more time.

Ensure the pipeline passes.

Tests

Finally, add a job for our automated tests to services/talk_booking/ci-cd.yml:

```yaml
service-talk-booking-tests:
  stage: test
  image: registry.gitlab.com/<your-gitlab-username>/talk-booking:cicd-python3.14-slim
  before_script:
    - cd services/talk_booking/
    - uv sync
  script:
    - uv run python -m pytest --junitxml=report.xml --cov=./ --cov-report=xml tests/unit tests/integration
  after_script:
    - curl -Os https://uploader.codecov.io/latest/linux/codecov
    - chmod +x codecov
    - ./codecov
  artifacts:
    when: always
    reports:
      junit: services/talk_booking/report.xml
```

Here, we ran the unit and integration tests with pytest.

Take note of --junitxml=report.xml. This option generates a JUnit XML report called report.xml, which is saved with the job's artifacts. Artifacts are files and directories that a job produces and GitLab stores after the job finishes; they can be passed to later jobs and downloaded from the GitLab UI. Because of when: always, the report is uploaded even when tests fail, which is exactly when you need it. In essence, JUnit reports make it easier (and faster!) to identify test failures.
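A JUnit report is plain XML: one testcase element per test, with a failure, error, or skipped child when the test didn't pass. The sketch below tallies those outcomes with the standard library, similar to the summary GitLab displays. summarize_junit is a hypothetical helper, and the sample report is hand-written and trimmed down (its test names are made up), not real pytest output.

```python
import xml.etree.ElementTree as ET


def summarize_junit(xml_text):
    """Count test outcomes in a JUnit XML report, like the one pytest writes with --junitxml."""
    root = ET.fromstring(xml_text)
    summary = {"passed": 0, "failed": 0, "errors": 0, "skipped": 0}
    for case in root.iter("testcase"):
        if case.find("failure") is not None:
            summary["failed"] += 1
        elif case.find("error") is not None:
            summary["errors"] += 1
        elif case.find("skipped") is not None:
            summary["skipped"] += 1
        else:
            summary["passed"] += 1
    return summary


# Hand-written sample; real pytest reports carry more attributes
SAMPLE_REPORT = """<testsuites>
  <testsuite name="pytest" tests="3">
    <testcase classname="tests.unit.test_example" name="test_passes"/>
    <testcase classname="tests.unit.test_example" name="test_fails">
      <failure message="assert 1 == 2">assert 1 == 2</failure>
    </testcase>
    <testcase classname="tests.unit.test_example" name="test_skipped">
      <skipped message="not ready yet"/>
    </testcase>
  </testsuite>
</testsuites>"""

print(summarize_junit(SAMPLE_REPORT))
# {'passed': 1, 'failed': 1, 'errors': 0, 'skipped': 1}
```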

We also generated an XML coverage report (coverage.xml), which after_script uploads to Codecov so you can track changes to code coverage over time.

This job runs in parallel with the code quality job, service-talk-booking-code-quality. It also runs under the same conditions as the code quality job.

You'll need to obtain a token from Codecov before proceeding. Navigate to https://codecov.io/, log in with your GitLab account, and find your repository.

Check out the Quick Start guide for help with getting up and running with Codecov.

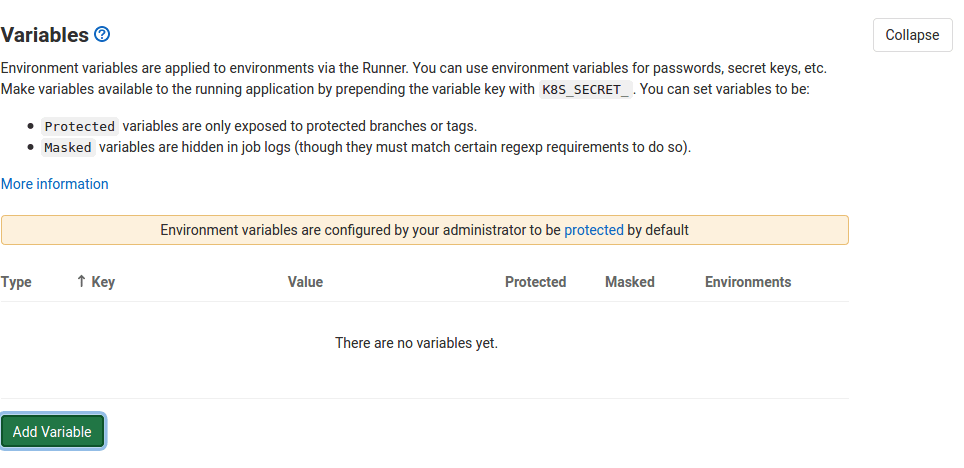

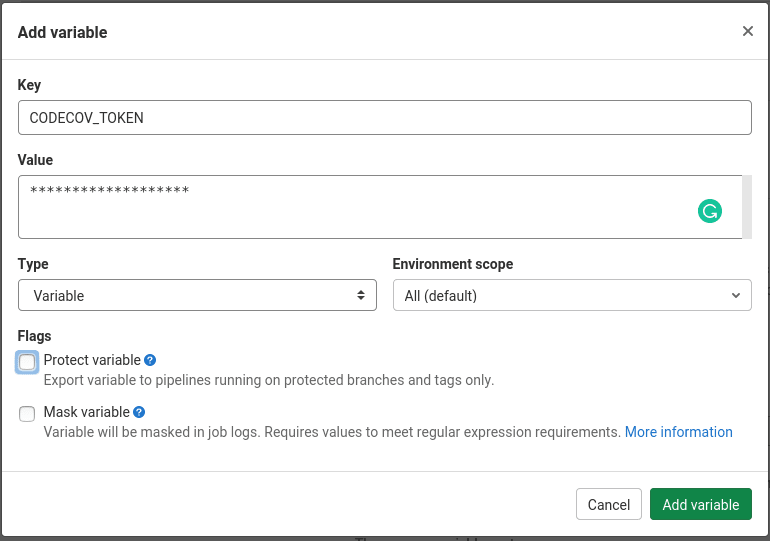

To upload the coverage report, you need to set a CODECOV_TOKEN variable. To do so, open "Settings -> CI/CD":

In the "Variables" section, click "Add variable", and set the key to "CODECOV_TOKEN" and the value to your token:

Click "Add variable" to save it.

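CI/CD variables are exposed to the pipeline's jobs as environment variables. If a variable is marked as protected, though, it's only passed to pipelines that run on protected branches or tags, so merge request pipelines from unprotected branches won't see it.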
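From a job's point of view, a CI/CD variable is just an environment variable. If you ever read one from your own Python code, failing fast with a clear message when it's missing saves debugging time. require_env below is a hypothetical helper sketching that; the usage passes a fake mapping so the example runs outside CI.

```python
import os


def require_env(name, environ=os.environ):
    """Return a CI/CD variable's value, failing loudly if it isn't set (hypothetical helper)."""
    value = environ.get(name)
    if not value:
        raise RuntimeError(f"{name} is not set; add it under Settings -> CI/CD -> Variables")
    return value


# In a CI job you'd call require_env("CODECOV_TOKEN"); a fake mapping stands in here
print(require_env("CODECOV_TOKEN", {"CODECOV_TOKEN": "example-token"}))
```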

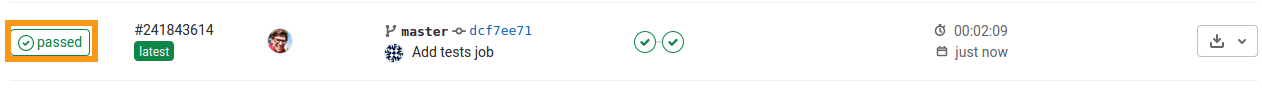

Create a new commit, and push to the remote:

```sh
$ git add -A
$ git commit -m 'Add tests job'
$ git push -u origin main
```

Ensure the pipeline passes.

Controlling When Jobs Run

Thus far, we're running every job for each pipeline run. This is unnecessary. It slows your pipeline, costing you time and money. It's also not great on the environment as a whole. Fortunately, we can use rules to control when jobs should and should not run.

We currently have the following jobs:

- build-python-ci-image
- service-talk-booking-code-quality
- service-talk-booking-tests

When should these run?

| Job | Branches | File Changes |
|---|---|---|
| build-python-ci-image | main | ci_cd/python/Dockerfile |
| service-talk-booking-code-quality | main, merge requests against main | services/talk_booking/**/* |
| service-talk-booking-tests | main, merge requests against main | services/talk_booking/**/* |

So, build-python-ci-image should only run on the main branch when ci_cd/python/Dockerfile has changed. The other two jobs should run on the main branch, or when a merge request against main is created or updated, but only when changes occur anywhere inside the "talk_booking" service.

Update the jobs:

```yaml
# .gitlab-ci.yml
build-python-ci-image:
  stage: docker
  image:
    name: gcr.io/kaniko-project/executor:v1.24.0-debug
    entrypoint: [""]
  before_script:
    - echo "{\"auths\":{\"${CI_REGISTRY}\":{\"auth\":\"$(echo -n ${CI_REGISTRY_USER}:${CI_REGISTRY_PASSWORD} | base64 -w 0)\"}}}" > /kaniko/.docker/config.json
  script:
    - /kaniko/executor
      --context "${CI_PROJECT_DIR}/ci_cd/python"
      --dockerfile "${CI_PROJECT_DIR}/ci_cd/python/Dockerfile"
      --destination "registry.gitlab.com/<your-gitlab-username>/talk-booking:cicd-python3.14-slim"
  rules: # new
    - if: '$CI_COMMIT_BRANCH == "main"'
      changes:
        - ci_cd/python/Dockerfile

# services/talk_booking/ci-cd.yml
service-talk-booking-code-quality:
  stage: test
  image: registry.gitlab.com/<your-gitlab-username>/talk-booking:cicd-python3.14-slim
  before_script:
    - cd services/talk_booking/
    - uv sync
  script:
    - uv run ruff check .
    - uv run ruff format --check .
    - uv run safety check
  rules: # new
    # Runs on main, or in merge request pipelines that target main
    - if: '($CI_COMMIT_BRANCH == "main") || ($CI_PIPELINE_SOURCE == "merge_request_event" && $CI_MERGE_REQUEST_TARGET_BRANCH_NAME == "main")'
      changes:
        - services/talk_booking/**/*

service-talk-booking-tests:
  stage: test
  image: registry.gitlab.com/<your-gitlab-username>/talk-booking:cicd-python3.14-slim
  before_script:
    - cd services/talk_booking/
    - uv sync
  script:
    - uv run python -m pytest --junitxml=report.xml --cov=./ --cov-report=xml tests/unit tests/integration
  after_script:
    - curl -Os https://uploader.codecov.io/latest/linux/codecov
    - chmod +x codecov
    - ./codecov
  artifacts:
    when: always
    reports:
      junit: services/talk_booking/report.xml
  rules: # new
    - if: '($CI_COMMIT_BRANCH == "main") || ($CI_PIPELINE_SOURCE == "merge_request_event" && $CI_MERGE_REQUEST_TARGET_BRANCH_NAME == "main")'
      changes:
        - services/talk_booking/**/*
```

**/* at the end matches any file in "services/talk_booking" itself or in any of its subdirectories.
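To check your understanding of how the if and changes parts combine, here's a Python model of the conditions from the table above for the two talk_booking jobs. Within a single rule, both parts must hold for the job to run. glob_to_regex is a simplified, hypothetical translation of the glob syntax (**/ matches zero or more directories, * stays within one path segment), not GitLab's actual matcher, and talk_booking_job_runs is likewise a hypothetical helper.

```python
import re


def glob_to_regex(pattern):
    """Translate a `changes` glob into a compiled regex (simplified, hypothetical)."""
    parts, i = [], 0
    while i < len(pattern):
        if pattern.startswith("**/", i):
            parts.append("(?:.*/)?")  # zero or more directories
            i += 3
        elif pattern.startswith("**", i):
            parts.append(".*")
            i += 2
        elif pattern[i] == "*":
            parts.append("[^/]*")  # stays within one path segment
            i += 1
        else:
            parts.append(re.escape(pattern[i]))
            i += 1
    return re.compile("".join(parts))


def talk_booking_job_runs(branch, source, target, changed_files):
    """Model the table's conditions for the service-talk-booking-* jobs."""
    on_main = branch == "main"
    mr_to_main = source == "merge_request_event" and target == "main"
    pattern = glob_to_regex("services/talk_booking/**/*")
    changed = any(pattern.fullmatch(path) for path in changed_files)
    # `if` and `changes` in the same rule must both be true
    return (on_main or mr_to_main) and changed


# A push to a feature branch named "test": neither job runs
print(talk_booking_job_runs("test", "push", None, ["services/talk_booking/web_app/main.py"]))  # False
```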

Commit and push your code.

service-talk-booking-code-quality and service-talk-booking-tests should run since we made changes in "services/talk_booking".

Try creating a new branch and pushing your code:

```sh
$ git checkout -b test
$ git push origin test
```

This won't trigger a pipeline since no jobs meet the criteria to run. Nice. Jump back to the main branch.

As you make your way through this course, you may need to comment out the rules section from time to time if you run into errors or just need to force a specific job to run. Make sure to uncomment the section once you fix the issue.

What Have You Done?

It may not seem like a lot of work, but we accomplished a number of important tasks in this chapter.

First, we set up code quality checks to ensure that our code follows a consistent style and is free of the security issues our tools can detect: Ruff's Bandit rules for the code itself and Safety for dependencies with known vulnerabilities. If any of these checks fail, the entire pipeline fails. More importantly, we know we must do something about it. Because the checks run early in the pipeline (future stages and jobs will run after the test stage), we get feedback as soon as possible.

Next, we added a job to run our tests. Like the quality checks, the automated tests run early in the pipeline. If any test fails, we can respond immediately. We also generated a JUnit report, which is used by GitLab to show the results of your tests in its UI.

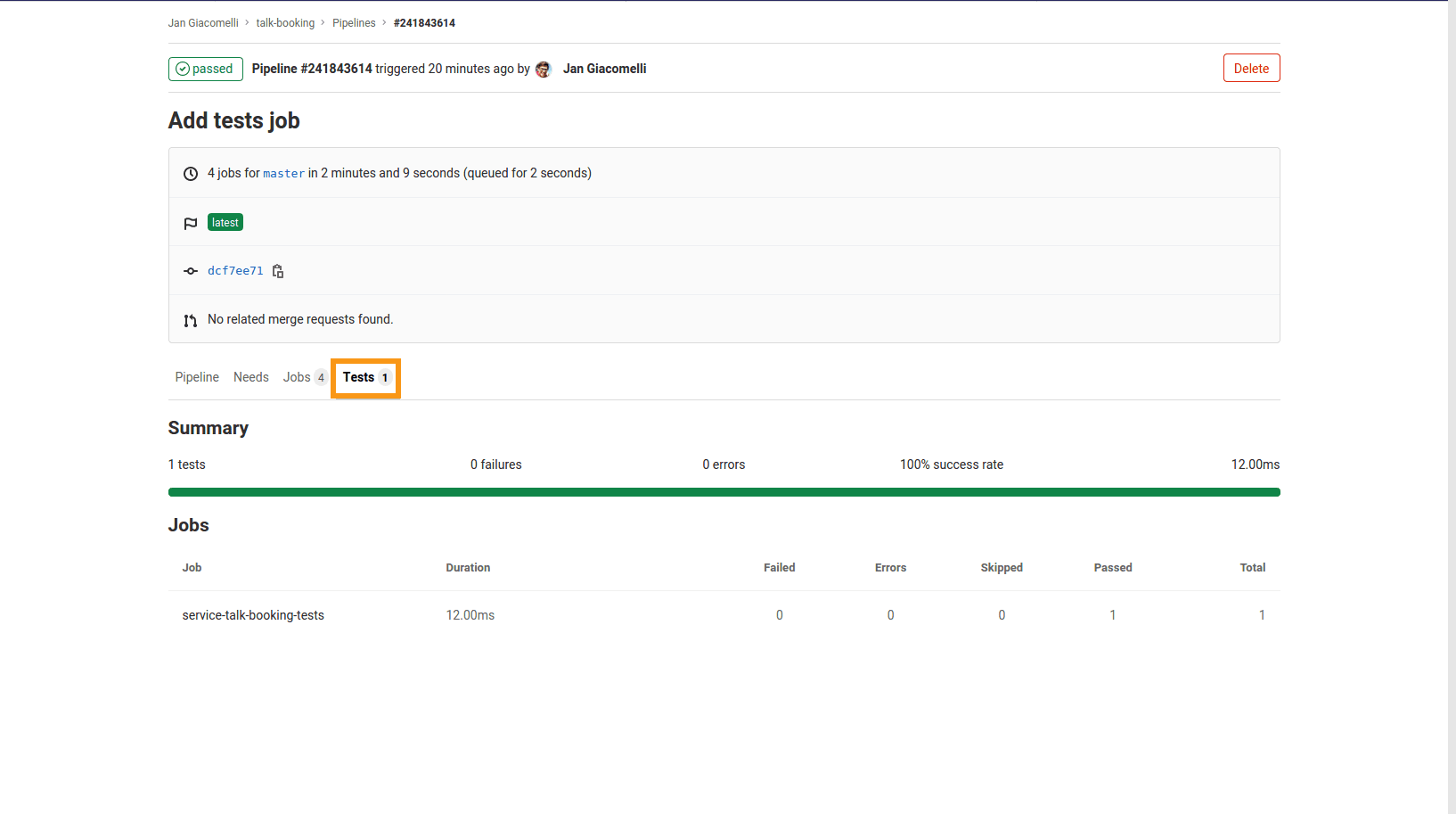

To see that report, click on the "Passed" badge in your last pipeline:

Then, within the pipeline details, open the "Tests" tab:

You should see a list of all jobs that produced test reports, along with the total number of tests that failed, errored, were skipped, or passed. Click on a job's name to see all of the tests it executed. Failed tests are colored red, which helps to simplify and speed up feedback loops. Notice a trend yet?

Further, you enabled code coverage tracking via Codecov. Don't worry so much about the percent number. Sure, you want that number to be greater than 70, but it's better to track changes to that number over time. You can compare the main branch with the PR/MR branch. You can see if it unexpectedly drops or increases. You can also see if it's gradually dropping. By analyzing that, you can take the right action. Again, your feedback loop just became richer and faster.
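Codecov does this comparison for you, but the arithmetic is simple. pytest-cov (via coverage.py) writes a Cobertura-style coverage.xml for --cov-report=xml, and its root coverage element carries an overall line-rate between 0 and 1. The sketch below compares two such reports; line_rate and coverage_delta are hypothetical helpers, and the XML snippets are hand-written stand-ins, not real reports.

```python
import xml.etree.ElementTree as ET


def line_rate(coverage_xml):
    """Read the overall line coverage (0.0 to 1.0) from a Cobertura-style coverage.xml."""
    return float(ET.fromstring(coverage_xml).get("line-rate"))


def coverage_delta(base_xml, head_xml):
    """Change in line coverage, in percentage points, from the base report to the head report."""
    return round((line_rate(head_xml) - line_rate(base_xml)) * 100, 2)


# Hand-written stand-ins for the main branch and an MR branch
main_report = '<coverage line-rate="0.82" branch-rate="0"></coverage>'
mr_report = '<coverage line-rate="0.79" branch-rate="0"></coverage>'

print(coverage_delta(main_report, mr_report))  # -3.0
```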

At this point, your project should have the following structure:

```
├── .gitignore
├── .gitlab-ci.yml
├── ci_cd
│   └── python
│       └── Dockerfile
└── services
    └── talk_booking
        ├── ci-cd.yml
        ├── uv.lock
        ├── pyproject.toml
        ├── tests
        │   ├── __init__.py
        │   ├── e2e
        │   │   └── __init__.py
        │   ├── integration
        │   │   ├── test_web_app
        │   │   │   ├── __init__.py
        │   │   │   └── test_main.py
        │   └── unit
        │       └── __init__.py
        └── web_app
            ├── __init__.py
            └── main.py
```
